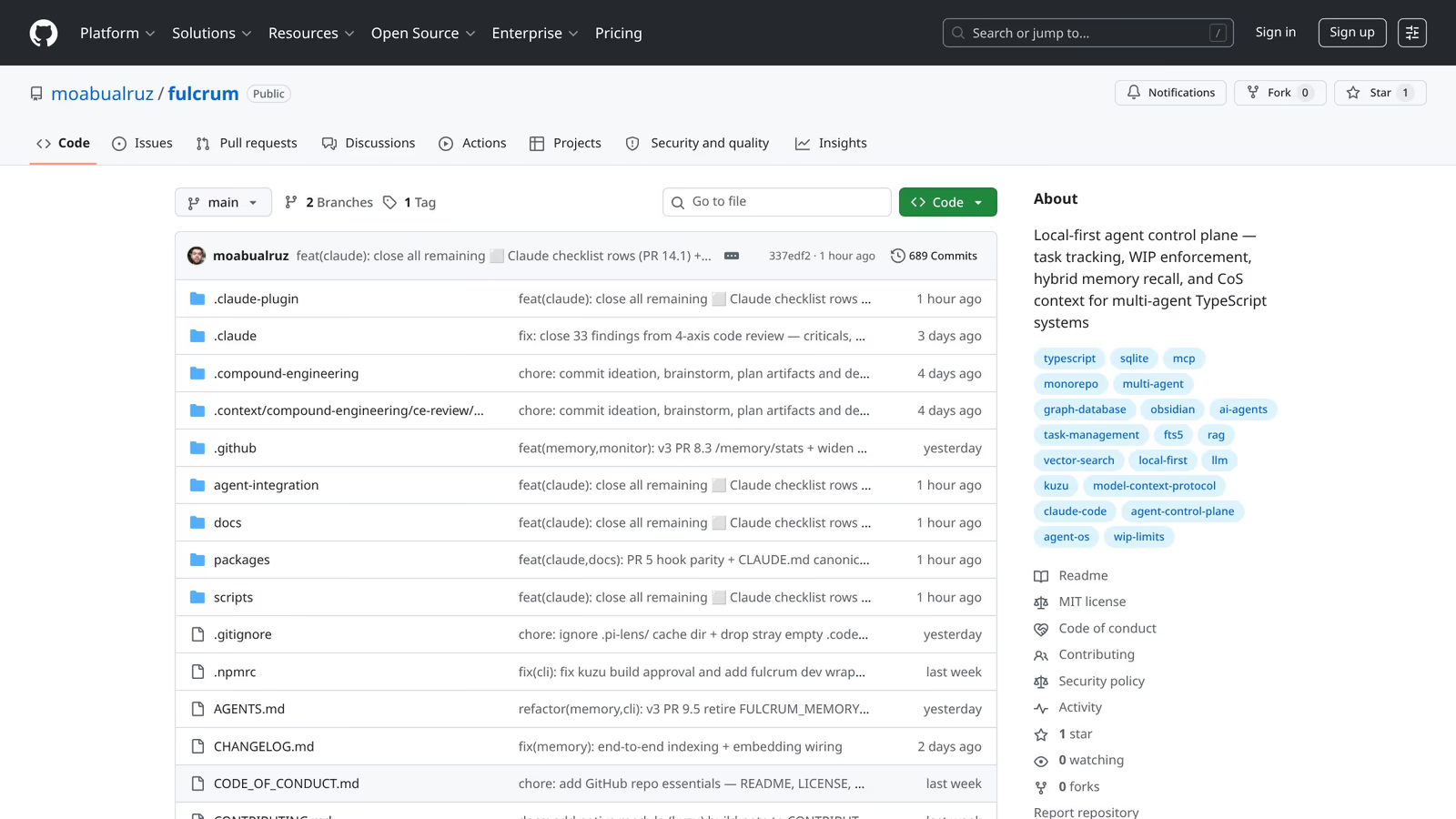

Fulcrum: a local-first agent control plane

Task tracking, WIP enforcement, hybrid memory recall, and Chief-of-Staff context for fleets of parallel AI coding agents.

I run five AI coding agents in parallel on most working days. Claude Code, Codex, Gemini CLI, Pi, OpenCode — each one capable, each one completely ignorant of what the others are doing. Before Fulcrum, my “orchestration layer” was a sticky note and a prayer. That is not hyperbole. There was a literal sticky note.

Why it exists

The problem isn’t that individual agents are bad. They’re remarkable. The problem is the gap between a single productive agent session and a multi-agent workflow that doesn’t melt your brain to manage.

Every vendor framework I evaluated was either locked to one provider (hard no), designed for stateless request-response loops (useless for sessions that span hours), or required a cloud backend I didn’t trust with my work-in-progress context. I wanted something that ran locally, survived a laptop restart, and didn’t require me to explain my entire project history from scratch every time I opened a new terminal tab.

So I built the thing I wanted to use. It’s called Fulcrum because the agent is the lever; the control plane is what determines where the leverage actually lands.

How it works

Fulcrum exposes an MCP server. MCP is the Model Context Protocol, the open protocol Anthropic introduced for connecting models to external tools, and it is the tool-calling surface Claude Code uses. Every agent that connects gets access to the same set of primitives: task management, run lifecycle tracking, and a hybrid memory store.

The storage layer is SQLite with FTS5 for full-text search, augmented with a vector index for semantic retrieval. When an agent wants to recall something (“what did we decide about the auth schema last Tuesday?”), Fulcrum runs three retrievers: an FTS5 keyword match, a vector cosine-similarity search, and a graph traversal across entities mentioned in the query. Their ranked results are fused via weighted Reciprocal Rank Fusion and filtered by a confidence floor before anything hits the agent context window.
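As a rough illustration of the fusion step, here is a minimal sketch of weighted Reciprocal Rank Fusion followed by a confidence floor. It is not Fulcrum's actual code. The function names, the retriever weights, the `floor` default, and the choice to apply the floor to a per-page confidence score (rather than to the fused score) are all assumptions made for the example. The constant `k=60` is the value conventionally used with RRF.

```python
from collections import defaultdict


def weighted_rrf(rankings, weights, k=60):
    """Fuse ranked lists of ids into one ranking.

    rankings: retriever name -> list of ids, best first (hypothetical shape).
    weights:  retriever name -> weight; missing names default to 1.0.
    """
    scores = defaultdict(float)
    for name, ranked in rankings.items():
        weight = weights.get(name, 1.0)
        for rank, doc_id in enumerate(ranked, start=1):
            # Each retriever contributes weight / (k + rank) for every hit.
            scores[doc_id] += weight / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def recall(rankings, weights, confidence, floor=0.5, k=60):
    """Return fused ids whose page confidence meets the floor (assumed rule)."""
    fused = weighted_rrf(rankings, weights, k)
    return [doc_id for doc_id, _ in fused if confidence.get(doc_id, 0.0) >= floor]


rankings = {
    "fts": ["a", "b", "c"],
    "vector": ["b", "a", "d"],
    "graph": ["b", "c"],
}
weights = {"fts": 1.0, "vector": 1.0, "graph": 1.0}
confidence = {"a": 0.9, "b": 0.8, "c": 0.3, "d": 0.7}
results = recall(rankings, weights, confidence)
```

RRF only looks at rank positions, so the three retrievers never need comparable score scales, which is convenient when one of them returns BM25-style scores and another returns cosine similarities.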

Memory has three tiers. L0 is raw dumps — verbatim, immutable, append-only. L1 is curated wiki pages that an LLM curator maintains with confidence scores, retention tiers, and back-references to their L0 sources. L2 is the vector index over L1. When you ask Fulcrum to recall something, you get L1 pages with L0 provenance attached, so you can always trace a claim to the raw session transcript that generated it.
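The relationship between L0 and L1 can be pictured with a couple of small record types. This is an illustrative sketch only: the class names, field names, and the `with_provenance` helper are hypothetical and do not reflect Fulcrum's real schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class L0Dump:
    """A raw, immutable dump (frozen to mirror the append-only rule)."""
    dump_id: str
    body: str


@dataclass
class L1Page:
    """A curated page that points back at the dumps it was derived from."""
    title: str
    summary: str
    confidence: float
    source_ids: list = field(default_factory=list)


def with_provenance(page, l0_store):
    """Attach the raw L0 dumps a page cites, skipping ids that are missing."""
    sources = [l0_store[i] for i in page.source_ids if i in l0_store]
    return {"page": page, "sources": sources}


l0_store = {"d1": L0Dump("d1", "raw transcript: chose JWT for auth")}
page = L1Page("Auth schema", "Auth uses JWT", 0.8, ["d1", "d404"])
hit = with_provenance(page, l0_store)
```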

Task management enforces WIP limits. If your team is deep in five things and someone tries to start a sixth, Fulcrum pushes back. This isn’t precious — it’s the single most effective thing I’ve found for preventing agent context fragmentation. An agent that’s juggling twelve open tasks produces worse output than one that’s focused on two.
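A WIP limit of this kind comes down to a guard on the "start task" path. The sketch below assumes a limit of five and an in-memory set of in-progress task ids. `TaskBoard`, `WipLimitExceeded`, and the method names are invented for the example.

```python
class WipLimitExceeded(Exception):
    """Raised when starting a task would exceed the WIP limit."""


class TaskBoard:
    def __init__(self, wip_limit=5):
        self.wip_limit = wip_limit
        self.in_progress = set()

    def start(self, task_id):
        if task_id in self.in_progress:
            return  # already started; starting again is a no-op
        if len(self.in_progress) >= self.wip_limit:
            raise WipLimitExceeded(
                f"{len(self.in_progress)} tasks in progress; limit is {self.wip_limit}"
            )
        self.in_progress.add(task_id)

    def finish(self, task_id):
        # Finishing frees a slot; unknown ids are ignored.
        self.in_progress.discard(task_id)


board = TaskBoard(wip_limit=5)
for n in range(5):
    board.start(f"task-{n}")
```

Raising an error, rather than silently queueing the sixth task, forces the caller to finish or drop something first.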

The Chief-of-Staff role is the orchestration tier: it builds world-state snapshots from active tasks, running agents, and recent events, then uses those snapshots to dispatch work to specialist roles. COS cannot write code. Specialist roles cannot spawn sub-orchestration. The role hierarchy is enforced at the tool layer, not the prompt layer.
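Enforcing roles at the tool layer means the check lives in the code that routes tool calls, so no prompt can talk its way around it. A minimal sketch, assuming a static allowlist per role; the role names, tool names, and `call_tool` function are hypothetical.

```python
# Hypothetical allowlists: the COS can plan and dispatch but has no write tools,
# and specialists can edit code but have no dispatch tool.
ROLE_TOOLS = {
    "cos": {"snapshot_world_state", "dispatch_task"},
    "specialist": {"read_file", "write_file", "run_tests"},
}


def call_tool(role, tool, handlers, **kwargs):
    """Route a tool call, refusing anything outside the caller's allowlist."""
    if tool not in ROLE_TOOLS.get(role, set()):
        raise PermissionError(f"role {role!r} may not call {tool!r}")
    return handlers[tool](**kwargs)


handlers = {
    "write_file": lambda path, text: f"wrote {len(text)} chars to {path}",
    "dispatch_task": lambda task_id, to_role: f"{task_id} -> {to_role}",
}
ok = call_tool("specialist", "write_file", handlers, path="a.py", text="x = 1")
```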

What’s interesting

The thing that surprised me most during development: the hardest part wasn’t the memory retrieval. It was the run lifecycle.

Agents crash. Terminals close. Laptop lids go down. A run that was “in progress” two hours ago may never get to make its complete_agent_run call. Fulcrum has a stale-run sweeper that runs at session start and aborts any run whose last heartbeat is older than the staleness threshold. Without that, the workspace state fills up with phantom running agents and your Chief-of-Staff starts making planning decisions based on ghosts.
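The sweeper logic is small enough to sketch against SQLite directly. The `runs` table, its columns, the status strings, and the function names below are assumptions for illustration, not Fulcrum's schema.

```python
import sqlite3
import time


def init_runs_table(conn):
    """Create a hypothetical runs table with a heartbeat timestamp."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS runs ("
        "run_id TEXT PRIMARY KEY, status TEXT NOT NULL, last_heartbeat REAL NOT NULL)"
    )


def sweep_stale_runs(conn, threshold_s, now=None):
    """Abort running runs whose heartbeat is older than threshold_s seconds.

    Returns the number of runs that were aborted.
    """
    now = time.time() if now is None else now
    cur = conn.execute(
        "UPDATE runs SET status = 'aborted' "
        "WHERE status = 'running' AND last_heartbeat < ?",
        (now - threshold_s,),
    )
    conn.commit()
    return cur.rowcount
```

Passing `now` explicitly keeps the function testable. Doing the sweep as a single `UPDATE` means two sessions starting at once cannot half-abort the same run.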

The other non-obvious thing: making memory write paths idempotent is much harder than it sounds when you have five concurrent agents all potentially capturing the same architectural decision from slightly different angles. The curator’s job is to deduplicate, supersede, and maintain a coherent L1 surface — which means the raw L0 layer needs to tolerate high write volume and the curation pass needs to handle conflict resolution gracefully.
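One common way to make an append-only write path safe to retry is to key each row on a hash of its content. The sketch below assumes that approach. The table name, columns, and helper functions are hypothetical. It only catches byte-identical retries from the same agent; near-duplicates written from different angles would still need the curation pass.

```python
import hashlib
import sqlite3


def open_l0(path=":memory:"):
    """Open a store with a hypothetical l0_dumps table keyed by content hash."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS l0_dumps ("
        "key TEXT PRIMARY KEY, agent TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return conn


def append_dump(conn, agent, body):
    """Insert a dump once; repeated calls with the same agent and body are no-ops.

    Returns True if a new row was written, False if it already existed.
    """
    key = hashlib.sha256(f"{agent}\x00{body}".encode("utf-8")).hexdigest()
    cur = conn.execute(
        "INSERT OR IGNORE INTO l0_dumps (key, agent, body) VALUES (?, ?, ?)",
        (key, agent, body),
    )
    conn.commit()
    return cur.rowcount == 1
```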

I’ve started thinking of the memory layer less like a database and more like a newspaper archive: the raw dumps are the daily editions (never throw them out), the curated pages are the encyclopedia (updated when new evidence arrives), and the confidence score is the editorial consensus on whether a claim has held up over time.

What I’d change

The vector index is currently per-workspace, and that’s a mistake. Cross-workspace recall — “I solved a similar problem in the payments service repo last month” — would be genuinely useful and the retrieval plumbing is already there. I just haven’t wired the scope correctly.

I also want to harden the curator’s conflict resolution logic. Right now, if two agents capture contradictory information about the same entity, the curator picks the higher-confidence version. That works fine in practice, but it silently discards the minority view. The right behavior is probably to surface the contradiction as a flagged pair and let a human or the COS resolve it explicitly.
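To make the proposed behavior concrete, here is a sketch in which the higher-confidence claim is still accepted provisionally, but a contradicting claim is kept as a flagged dissent instead of being dropped. The `Claim` and `Resolution` types and the `reconcile` function are invented for the example, and comparing claim text for equality is a stand-in for whatever contradiction check a real curator would use.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Claim:
    entity: str
    text: str
    confidence: float
    agent: str


@dataclass(frozen=True)
class Resolution:
    accepted: Claim
    dissent: Optional[Claim] = None
    needs_review: bool = False


def reconcile(a, b):
    """Keep the higher-confidence claim, but surface a contradiction for review."""
    if a.entity != b.entity:
        raise ValueError("claims describe different entities")
    accepted, other = (a, b) if a.confidence >= b.confidence else (b, a)
    if accepted.text == other.text:
        return Resolution(accepted=accepted)  # agreement, nothing to flag
    return Resolution(accepted=accepted, dissent=other, needs_review=True)


x = Claim("auth", "sessions use JWT", 0.8, "claude")
y = Claim("auth", "sessions use opaque tokens", 0.6, "codex")
result = reconcile(x, y)
```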

The dispatch path — where COS actually spawns a Claude Code subprocess for a specialist role — is fire-and-forget right now. I want proper stdout streaming so the COS can observe what’s happening and intervene if a run is going sideways, without having to wait for the heartbeat to go cold.
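Streaming a child process's output line by line is available in the Python standard library via `subprocess.Popen`. The sketch below shows the general shape. `stream_run` and the `on_line` callback are hypothetical names, and the demo command is a plain Python one-liner rather than a real agent invocation.

```python
import subprocess
import sys


def stream_run(cmd, on_line):
    """Run cmd, passing each output line to on_line as it arrives.

    stderr is merged into stdout so the observer sees one ordered stream.
    Returns the process exit code.
    """
    proc = subprocess.Popen(
        cmd,
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        text=True,
        bufsize=1,  # line-buffered on the reading side
    )
    with proc.stdout:
        for line in proc.stdout:
            on_line(line.rstrip("\n"))
    return proc.wait()


lines = []
code = stream_run([sys.executable, "-c", "print('step 1'); print('step 2')"], lines.append)
```

An observer built this way can react to individual lines, for example by terminating the child when it sees a pattern that indicates a run is going wrong. Note that lines only arrive promptly if the child flushes its own output.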

Fulcrum is the piece of infrastructure I wish had existed when I started running agents seriously. Building it taught me that multi-agent orchestration is mostly a distributed-systems problem with a thin layer of LLM-specific concerns on top, and the distributed-systems problems are the ones that will bite you first.